Microsoft Copilot Governance Playbook

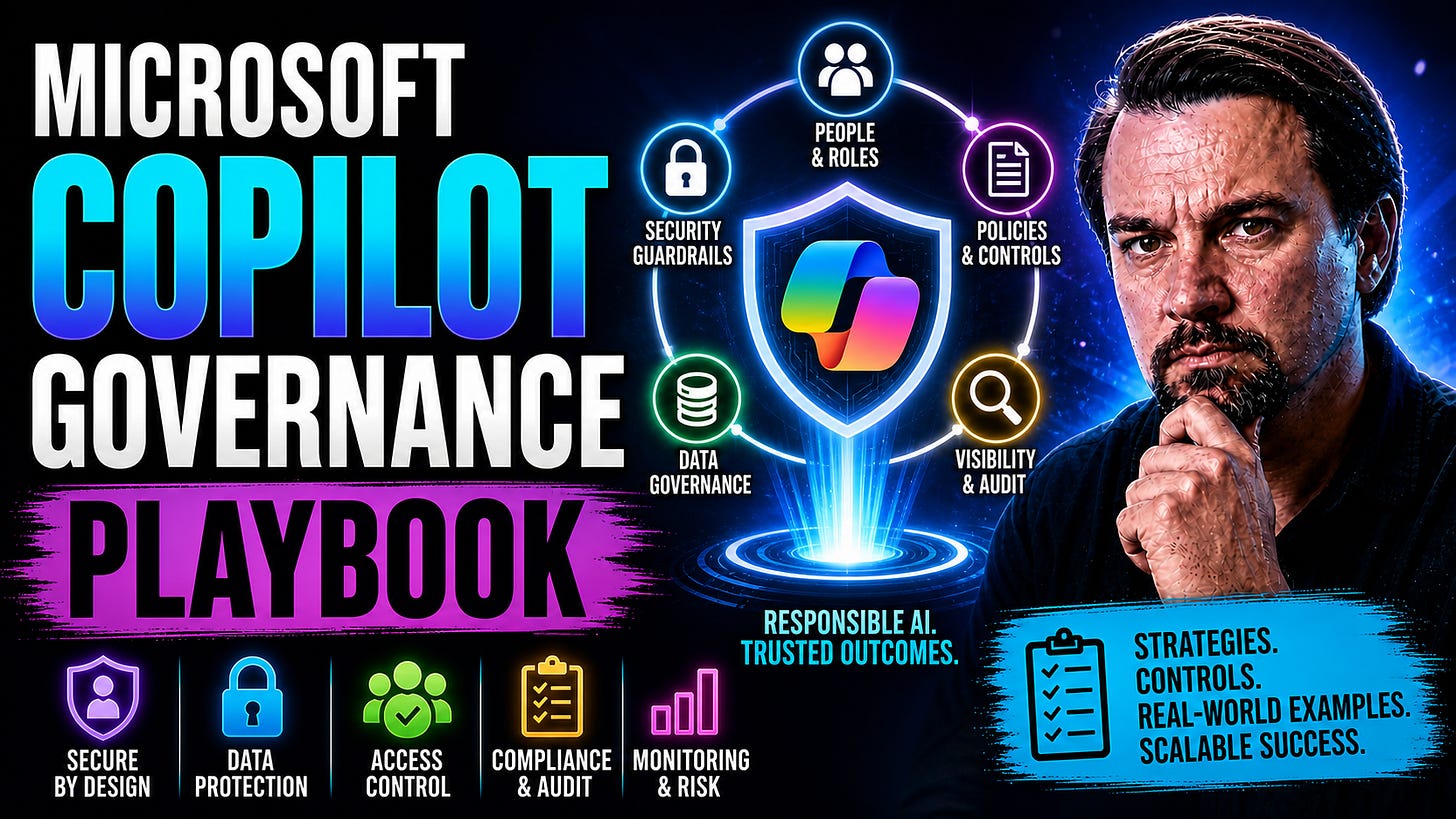

Master AI compliance with our Copilot Governance Playbook. Implement a robust governance strategy, sensitivity labels, and controls for your AI deployment.

“Every Copilot response is a compliance event; governance is how you control the outcome.” - Mirko Peters

Microsoft Copilot Governance Playbook: The 2026 Strategic Guide

This playbook is your one-stop shop for building a Microsoft Copilot governance program that actually works. Weaving together proven strategies and real-world checklists, it gives you everything you need to make Copilot safe, compliant, and valuable for your business—whether you’re rolling it out to ten people or ten thousand. Here you’ll find practical frameworks: how to set up data protection, strong monitoring, and self-service models, all while making sure you don’t drown in red tape or lose sight of business agility.

I’m not here for puffed-up theory or copied-and-pasted Microsoft docs. Instead, this guide pulls from Microsoft’s own best practices, lessons learned from what competitors get wrong, and new approaches big organizations are using for Copilot and AI adoption. It steps through foundational principles, hands-on 90-day roadmaps, advanced Purview protection, KPI measurement, incident response, and much more, always grounded in what matters for U.S.-based organizations deploying Copilot in the wild world of Microsoft 365.

It’s structured to walk you through every major governance question. By the time you hit the end, you’ll have a full toolkit: principles, checklists, quick wins, deep dives, and expert resources. You’ll have plenty of ways not only to launch Copilot safely, but also to keep it delivering business outcomes (and staying on the right side of compliance) as you scale up for the future.

Microsoft Copilot Governance

Definition: Microsoft Copilot Governance refers to the policies, controls, roles, and processes that organizations put in place to manage the safe, compliant, and effective use of Microsoft Copilot — the AI-powered assistant integrated across Microsoft 365 and related services.

Short explanation: Effective governance for Microsoft Copilot addresses data privacy and residency, access and entitlement management, prompt and output oversight, security monitoring, compliance reporting, and change management. A practical Microsoft Copilot governance playbook codifies these elements into guidance, standards, and repeatable workflows so IT, security, legal, and business teams can enable Copilot while minimizing risks, ensuring regulatory compliance, and maximizing business value.

9 Surprising Facts About the Microsoft Copilot Governance Playbook

Policy enforcement can be automatic at the prompt level. The Microsoft Copilot Governance Playbook supports automated enforcement on user prompts and responses, allowing real‑time blocking or modification of queries that violate data protection or compliance rules.

It integrates with Microsoft Purview for data classification. Copilot governance leverages Purview classifications and sensitivity labels so policies apply based on actual data classification, not just user or app context.

Fine‑grained controls exist per scope and persona. You can define different governance policies by user group, tenant, team, or Copilot persona, enabling tailored restrictions for developers, HR, legal, and front‑line staff.

Built‑in prompt redaction and masking are available. The playbook includes capabilities to automatically redact or mask sensitive tokens (PII, account numbers, secrets) before content is sent to the model or returned to users.

It creates immutable audit trails for every interaction. Copilot governance can log prompts, responses, policy decisions, and redaction actions to support investigations, eDiscovery, and compliance audits without exposing full sensitive content.

Human review workflows are natively supported. Policies can route risky prompts or outputs into human‑in‑the‑loop review queues, integrating governance directly into business approval processes.

Governance can reduce data exfiltration risk even with external connectors. The playbook applies controls to data accessed via connected services (SharePoint, Teams, third‑party connectors), minimizing accidental leaks when Copilot reads or writes to external sources.

Continuous compliance scoring and alerts are included. The Microsoft Copilot Governance Playbook provides monitoring dashboards and scoring that surface drift, risky patterns, and policy violations so teams can remediate proactively.

Customization extends to model behavior via guardrails—not model retraining. Rather than retraining core models, the playbook uses policy layers, prompt transformation, and response filters to shape outputs, preserving model updates while enforcing enterprise rules.
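To make the redaction idea from the list above a bit more concrete, here is a minimal Python sketch of masking sensitive tokens before text leaves your boundary. It is not how Copilot or Purview implements redaction; the `REDACTION_PATTERNS` table and the `redact` function are hypothetical names, and the two regular expressions are deliberately simple stand-ins for a real classifier.

```python
import re

# Hypothetical patterns for illustration only. A real deployment would rely on
# a maintained classifier rather than two hand-written regular expressions.
REDACTION_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text: str) -> tuple[str, list[str]]:
    """Replace each match with a [LABEL] placeholder and report which labels fired."""
    fired = []
    for label, pattern in REDACTION_PATTERNS.items():
        text, count = pattern.subn(f"[{label}]", text)
        if count:
            fired.append(label)
    return text, fired


masked, hits = redact("Email jane.doe@example.com about SSN 123-45-6789.")
print(masked)  # Email [EMAIL] about SSN [SSN].
print(hits)    # ['EMAIL', 'SSN']
```

Returning the list of fired labels alongside the masked text means the audit trail can record that a redaction happened without storing the sensitive value itself.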

Foundations of an Effective Microsoft Copilot Governance Strategy

Before you get deep into policies, tools, or technical enforcement, you need a strong foundation for Copilot governance. This means going beyond just IT controls—you’ll need executive sponsorship, cross-functional ownership, and a solid structure that sets the right tone from day one. The right foundation aligns everybody, keeps oversight tight, and unlocks Copilot’s potential without introducing wild new risks.

Building this base isn’t just about who “owns” Copilot or checking boxes for security. It’s about setting up core principles that embed trust, transparency, and accountability right up the chain. Getting your C-suite and line-of-business leaders on board is key, not just to avoid nasty surprises, but to ensure your AI transformation stays on target—helping you hit business, compliance, and productivity goals all at once.

This section walks you through the “why” and “what” of Copilot governance strategy—why these building blocks matter, what goes into them, and how they help you build a lasting Copilot program. Up next: how to lock in the critical principles and design a team structure that scales, so governance supports—not blocks—your most important work. If you want a real-life look at how governance boards help prevent AI mayhem, check out this podcast episode for insights on governance boards serving as the last defense against operational failures.

Effective Governance Principles and Executive Accountability

Define Core Governance Principles: Start by writing down the key values your Copilot program is built on: transparency, accountability, privacy, and operational consistency. These principles become your North Star, guiding policy decisions and helping teams know what’s expected as Copilot is rolled out enterprise-wide.

Secure Visible Executive Leadership: Put Copilot governance on the C-suite’s agenda. Having explicit executive sponsorship means leadership owns outcomes, commits resources, and breaks down silos. Executive visibility keeps governance from being an IT-only “project,” instead turning it into an enterprise priority.

Embed Cross-Functional Accountability: Assign clear roles and responsibilities across business, security, legal, and compliance. Make sure leaders in each function know what risks they own. This reduces confusion about who answers for security gaps or compliance hits, building a governance-minded culture from the top down.

Make Governance Everyone’s Business: No tool or policy can substitute for a culture that takes governance seriously. Run awareness sessions, share regular updates, and reward behaviors that align with your principles. The goal is for every manager, analyst, and front-line worker to “own” data protection and policy up front, not treat it like paperwork to avoid trouble later.

Standardize Control Mechanisms: Set up control planes for identity and access (think Entra ID), contractually bind Copilot usage to business risk tolerances, and automate enforcement as much as possible. For tips on how to tame the governance chaos of AI agents and avoid identity drift, check out this detailed guide.

Building a Copilot Governance Council and Lightweight Operating Model

Establish a Cross-Functional Council: Bring together stakeholders from IT, security, compliance, legal, HR, and business units. Each member represents their department’s unique risks, priorities, and use cases. This council acts as the nerve center, driving policy, escalation, and improvement cycles around Copilot governance.

Define Roles and Responsibilities: Specify decision rights: who approves rollouts, who monitors risk, who reviews escalations. Have named data stewards, AI product owners, security leads, and compliance officers, so nobody’s confused about who does what if issues arise.

Adopt a Lightweight Operating Model: Don’t turn governance into bureaucracy. Use agile meetings, real-time dashboards, and streamlined escalation paths. Focus on quick action and continuous feedback; avoid heavyweight approval chains that grind Copilot adoption to a halt.

Design Governance Architecture for Scale: Set up deterministic guardrails (role-based access controls, auto-labeling, automated documentation) so policies stay enforceable as you expand Copilot. Learn from the world of Azure: as detailed in this Azure governance deep-dive, successful governance starts when policies are enforced as code, not just written on paper.

Implement Continuous Improvement Mechanisms: Establish regular reviews of metrics, incident trends, and policy exceptions. Use lessons learned to adapt processes and make sure governance keeps up with Copilot’s evolution, never falling behind or drifting into chaos.
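If “policies enforced as code” sounds abstract, the sketch below shows the general shape in Python: rules live as data with a check function, and any site record can be evaluated against them. Everything here is an assumption for illustration, including the `Site` fields, the `GOV-01` to `GOV-03` rule IDs, and the rules themselves; it is not an Azure Policy or Purview API.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Site:
    name: str
    label: Optional[str]      # sensitivity label, None if unlabeled
    owner_count: int
    external_sharing: bool


@dataclass(frozen=True)
class Rule:
    rule_id: str
    description: str
    check: Callable[[Site], bool]   # returns True when the site complies


# Hypothetical rule set; a governance council would define its own.
RULES = [
    Rule("GOV-01", "Every site carries a sensitivity label",
         lambda s: s.label is not None),
    Rule("GOV-02", "Every site has at least two owners",
         lambda s: s.owner_count >= 2),
    Rule("GOV-03", "Confidential sites do not allow external sharing",
         lambda s: not (s.label == "Confidential" and s.external_sharing)),
]


def violations(site: Site) -> list[str]:
    """Return the IDs of every rule the site fails, in rule order."""
    return [rule.rule_id for rule in RULES if not rule.check(site)]


print(violations(Site("Finance", "Confidential", 1, True)))   # ['GOV-02', 'GOV-03']
print(violations(Site("Intranet", "Internal", 3, False)))     # []
```

Because the rules are plain data, the council can review changes to them the same way it reviews any other change: in version control, with a named approver.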

Key Benefits of a Microsoft Copilot Governance Strategy

The Microsoft Copilot Governance Playbook provides a structured approach to safely launch, manage, and scale Copilot across the enterprise. Key benefits include:

Risk reduction: Establishes policies and controls to minimize data leakage, compliance breaches, and inappropriate AI outputs.

Data protection: Defines data residency, classification, and access rules to ensure sensitive information is handled according to organizational standards.

Regulatory compliance: Aligns Copilot use with industry regulations and audit requirements through documented processes and evidence.

Consistent user experience: Standardizes deployment, configurations, and best practices so users receive predictable, supported Copilot behavior.

Accountability and governance: Creates clear roles, responsibilities, and approval workflows for Copilot provisioning, extensions, and exceptions.

Operational scalability: Provides templates and automation for onboarding, monitoring, and lifecycle management as adoption grows.

Security alignment: Integrates with existing identity, endpoint, and threat protection controls to maintain security posture.

Cost control: Enables policy-driven use and monitoring to prevent uncontrolled consumption and manage licensing effectively.

Change management and training: Includes guidance for communication, user training, and support to accelerate safe adoption.

Continuous improvement: Establishes metrics, feedback loops, and review processes to evolve governance in response to new features and risks.

Phased 90-Day Implementation Roadmap for Microsoft Copilot Governance

Rolling out Copilot isn’t something you want to do in a single leap. The most effective organizations use a stepwise, 90-day roadmap—modeled after the best of Microsoft’s advice and proven enterprise playbooks—to reduce risk, maximize learning, and keep governance in step with adoption.

This strategic approach walks you through each stage: assessment and boundary mapping, establishing the foundation, running a pilot, and then scaling with hardened controls. By moving through phases, you give your teams space to sanitize data, set up policies, train users, and validate controls without getting overwhelmed or making expensive missteps.

The key here is rhythm—steady progress, quick feedback, and continuous improvement. From scoping your data boundaries to training pilot champions and measuring your results, every stage comes with targeted goals and practical actions. If you want inspiration on why governed enablement and training matter for success, have a listen to this overview of Copilot learning centers that can anchor your deployment and keep the whole thing on track.

Phase 1: Assessment, Data Boundary Mapping, and Quick Access Hygiene Wins

Inventory Your Microsoft 365 Tenant: Catalog all the Teams, SharePoint sites, groups, and legacy repositories Copilot could touch. Identify where your most sensitive or regulated data lives. Don’t just look at what’s active; include the old, forgotten files that AI could surface.

Map Data Boundaries and Permissions: Draw a clear line around what kinds of data Copilot is (and is not) allowed to access. Create a map showing who can reach what, and where risky open sharing or “shadow IT” could be a threat. For tips, the episode on reining in Shadow IT is a must-listen.

Quick Wins for Access Hygiene: Clean up legacy permissions, remove orphaned owners, and disable unaudited external sharing. These high-impact “fixes” drastically reduce exposure before Copilot ever gets access. Combine automated access reviews, policy cleanups, and templated guides for data owners.

Automate Where Possible: Use native tools like Entra ID activity logs, access review workflows, or PowerShell scripts to speed up the drudge work. Don’t waste precious hours clicking through each group by hand; let automation do the scaling. Need a governance-first perspective? Read up on the difference between access and ownership in this breakdown.

Document Pitfalls and Lessons Learned: Keep a shared log of common stumbles found during inventory, like duplicated Teams, forgotten guest users, or unrestricted sharing. These recurring issues need to be fixed early to prevent oversharing and accidental Copilot leaks later on.
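The Phase 1 steps above lend themselves to a quick triage script. The Python sketch below reads a site inventory export and ranks sites by how many hygiene problems they have. The CSV layout, column names, and the 180-day inactivity threshold are all assumptions made for this example; your own export will look different.

```python
import csv
import io

# Hypothetical inventory export; the column names are assumptions for this sketch.
INVENTORY_CSV = """site,owners,anyone_links,guest_users,days_since_activity
Finance-Archive,0,4,2,410
Marketing,3,0,5,3
HR-Onboarding,1,1,0,20
"""


def hygiene_findings(row: dict) -> list[str]:
    """List the access-hygiene problems found on one inventory row."""
    findings = []
    if int(row["owners"]) == 0:
        findings.append("orphaned (no owner)")
    if int(row["anyone_links"]) > 0:
        findings.append("anonymous sharing links")
    if int(row["guest_users"]) > 0 and int(row["days_since_activity"]) > 180:
        findings.append("guests on inactive site")
    return findings


def triage(csv_text: str) -> list[tuple[str, list[str]]]:
    """Return flagged sites, those with the most findings first."""
    reader = csv.DictReader(io.StringIO(csv_text))
    flagged = [(row["site"], hygiene_findings(row)) for row in reader]
    flagged = [item for item in flagged if item[1]]
    return sorted(flagged, key=lambda item: len(item[1]), reverse=True)


for site, findings in triage(INVENTORY_CSV):
    print(site, findings)
```

Sorting by number of findings gives data owners a short, ordered worklist instead of a raw dump, which is usually what gets quick wins actually done.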

Phase 2: Foundation and Policy Guardrails for Secure Copilot Adoption

Implement Strong Identity Controls: Set up Conditional Access so Copilot is reachable only from trusted, compliant devices with strong authentication. Review existing policies for loopholes and tighten overbroad exclusions, as shown in this deep dive on Conditional Access policy gaps.

Fix Permissions and Misconfigurations: Check that user and app permissions match least-privilege principles. Review Graph API permissions and segment access so Copilot never sees data beyond the end user’s scope. A full guide can be found at keeping Copilot secure and compliant.

Enforce Policy Guardrails and Data Governance: Create policies for labeling, sharing, and DLP rules that Copilot must respect. Extend existing Purview or Defender controls, ensuring that any content surfaced is tracked, classified, and auditable across your ecosystem.

Baseline, Monitor, and Iterate: Develop a metrics baseline: track who is using Copilot, what data is accessed, and how often controls flag issues. Establish a quick feedback loop for ongoing policy refinement before a wider rollout.

Document and Communicate Changes: Keep stakeholders in the loop about what’s locked down and why. Transparency builds trust, shows due diligence, and helps business users adjust their workflows for the AI-powered future.
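For the Graph API permission review mentioned above, a first pass can be as simple as flagging every app that holds a tenant-wide scope. The Python sketch below assumes you have already exported each app’s granted scopes into a dictionary; the app names are made up, and treating every scope ending in `.All` as “broad” is a naming-convention heuristic, not an official classification.

```python
# Hypothetical snapshot of granted Microsoft Graph scopes per app registration.
APP_GRANTS = {
    "hr-bot": ["User.Read", "Sites.Read.All"],
    "expense-connector": ["Files.ReadWrite.All", "Mail.Send"],
    "team-dashboard": ["User.Read"],
}


def broad_scopes(scopes: list[str]) -> list[str]:
    """Return scopes that, by naming convention, apply across the whole tenant."""
    return sorted(scope for scope in scopes if scope.endswith(".All"))


def review(grants: dict[str, list[str]]) -> dict[str, list[str]]:
    """Map each app that holds at least one broad scope to those scopes."""
    flagged = {}
    for app, scopes in grants.items():
        broad = broad_scopes(scopes)
        if broad:
            flagged[app] = broad
    return flagged


print(review(APP_GRANTS))
# {'hr-bot': ['Sites.Read.All'], 'expense-connector': ['Files.ReadWrite.All']}
```

A flagged app is not automatically a problem; the point is to give each one a named owner and a written justification before Copilot goes wider.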

Phase 3: Enablement, Controlled Pilot, and Employee Training Best Practices

Launch with Champions, Not Just Test Users: Pick knowledgeable staff (“champions”) from different areas who will test-drive Copilot, challenge policies, and report pain points. The more realistic and diverse your pilot group, the more robust your feedback will be.

Deliver Targeted, Practical Training: Don’t dump users into generic e-learnings. Use real-world examples, prompt libraries, and quick how-tos specific to your tenant and security requirements. Centralize resources as a Copilot Learning Center; here’s an episode on getting that right.

Monitor Early Usage and Guardrail Effectiveness: Set up analytics dashboards to measure who’s using Copilot, what information it touches, and how often controls step in. Quickly remediate policy misses surfaced by pilot testing; it is better to patch now than after a data incident.

Iterate Based on Real Feedback: Collect structured feedback and user-reported roadblocks. Refine policies, tweak access controls, and adjust training materials before the floodgates open. Make “early complaints” your gold mine for continuous improvement.

Celebrate Quick Wins: Share success stories and metrics from your pilot, such as reduced help desk tickets, faster workflows, and new governance guardrails. This builds buy-in beyond IT and paves the way for broader adoption with high user confidence.

Phase 4: Scaling, Hardening Controls, and Institutionalizing Governance

Expand Department-by-Department: Roll out Copilot access methodically, by business unit, job role, or project. This staged approach makes it easier to track adoption, spot anomalies, and fine-tune policies for different needs.

Harden and Automate Controls: Review DLP, access, and monitoring policies. Use auto-escalation, dynamic labeling, and alerting to catch risky use and misconfigurations at scale. For advanced enforcement tips, see extending compliance with Purview and Sentinel.

Institutionalize Feedback and Continuous Improvement: Build regular review cycles to capture lessons learned, spot process drift, and calibrate governance models. Encourage every department to surface problems and submit ideas for better AI adoption.

Track and Optimize Metrics: Monitor KPIs like active users, DLP alerts, incident response rates, and business outcomes. Use this data to justify license expansions, tighten security, and cut underused resources. Adaptive, data-led governance future-proofs your Copilot investment.

Reinforce Governance-Minded Culture: Highlight team contributions in making Copilot safe and effective. Use recognition, training refreshers, and transparent communications to bake governance into daily work instead of a one-time checklist. This keeps your Copilot program resilient through future changes and challenges.
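As a rough illustration of the KPI tracking described above, the Python sketch below turns a handful of raw counts into three comparable numbers. The metric names, the choice of “alerts per 100 active users,” and the sample figures are assumptions for this example, not a prescribed scorecard.

```python
from statistics import median


def kpis(licensed: int, active: int, dlp_alerts: int,
         triage_minutes: list[float]) -> dict[str, float]:
    """Summarize adoption and control activity for one reporting period."""
    if licensed <= 0 or active <= 0:
        raise ValueError("licensed and active user counts must be positive")
    return {
        # Share of paid licenses that were actually used.
        "adoption_rate": round(active / licensed, 2),
        # Normalizing by active users keeps the number comparable as rollout grows.
        "dlp_alerts_per_100_active": round(100 * dlp_alerts / active, 1),
        # Median is less distorted than the mean by one slow incident.
        "median_triage_minutes": median(triage_minutes),
    }


# Made-up sample period: 400 licenses, 260 active users, 13 DLP alerts.
report = kpis(licensed=400, active=260, dlp_alerts=13,
              triage_minutes=[12, 45, 30, 90])
print(report)
# {'adoption_rate': 0.65, 'dlp_alerts_per_100_active': 5.0, 'median_triage_minutes': 37.5}
```

A low adoption rate is an argument for trimming licenses or improving training; a rising alert rate per active user is a prompt to look at policy tuning before expanding to the next department.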

Securing Copilot with Microsoft Purview Data Protection and Classification

Copilot will dig into your organization’s data, so robust protection is non-negotiable. Microsoft Purview is your best friend here—with its rich classification, labeling, DLP, and lifecycle controls, you can keep sensitive information locked down wherever Copilot goes.

This section zooms in on building an effective Purview strategy: how to use sensitivity labels at both the container and file level, set up DLP rules that block data leaks before they happen, and automate content lifecycle to keep your data environment clean and secure. These aren’t just “check the box” controls—they’re how you operationalize governance even as Copilot scales across dozens of teams and terabytes of files.

From regulatory audits to keeping wandering AI outputs in check, this section gives you the practical, step-by-step moves to label, monitor, and manage data risk at every stage. If you want a taste of how the pros blend DLP and agent governance, try this strategy-packed episode on Purview and Copilot agents.

Implementing Sensitivity Labels and Container-File Classification

Set Up a Tiered Label Taxonomy: Start by creating clear, intuitive label levels, like Public, Internal, Confidential, and Highly Confidential. Make sure these are easy for staff to understand and consistent across both files and containers (e.g., Teams, SharePoint sites, and M365 Groups).

Classify Both Containers and Files: Apply labels not just to documents, but to entire “containers.” A container label governs settings such as privacy, external sharing, and unmanaged-device access for the whole site or team; it does not by itself label or encrypt the files inside. Pair it with a default library label or auto-labeling so individual files carry their own protection, and Copilot won’t accidentally surface high-risk data to users lacking clearance.

Automate Default Labeling: Use Microsoft Purview auto-labeling for SharePoint, OneDrive, and email. Automate as much as possible to reduce the chances of users forgetting or misclassifying documents. For practical techniques on Purview-driven content management, check out this Purview and SharePoint “document chaos” explainer.

Integrate Labeling with Employee Workflows: Embed labeling into daily processes, templates, and app defaults so end users don’t have to guess what to do. The easier you make it, the more consistently labels will be applied organization-wide.

Audit and Tweak Label Effectiveness: Review how well auto-labeling and policy inheritance are working. Use audit trails to spot blind spots, like unlabeled sensitive data or the spread of “Highly Confidential” tags to low-risk content. Refine label definitions and rules as your environment evolves.
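When you plan a tiered taxonomy, it can help to model the tiers as an ordered type so “stricter than” is unambiguous. The Python sketch below does that and applies one possible planning rule: treat content at the stricter of its container label and its own file label. That rule is a modeling assumption for governance reviews, not a description of how Purview resolves labels, and the function names are hypothetical.

```python
from enum import IntEnum
from typing import Optional


class Label(IntEnum):
    """Tiers ordered from least to most restrictive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    HIGHLY_CONFIDENTIAL = 3


def effective_label(container: Label, file: Optional[Label]) -> Label:
    """Planning rule (an assumption): unlabeled files take the container tier,
    labeled files take the stricter of the two."""
    if file is None:
        return container
    return max(container, file)


def may_surface(user_clearance: Label, container: Label,
                file: Optional[Label]) -> bool:
    """True when the user's clearance meets or exceeds the effective tier."""
    return user_clearance >= effective_label(container, file)


print(effective_label(Label.CONFIDENTIAL, None).name)              # CONFIDENTIAL
print(effective_label(Label.INTERNAL, Label.HIGHLY_CONFIDENTIAL).name)  # HIGHLY_CONFIDENTIAL
print(may_surface(Label.INTERNAL, Label.CONFIDENTIAL, Label.PUBLIC))    # False
```

Running your real inventory through a rule like this is a cheap way to find files whose own label is weaker than the site they live in, which is exactly the mismatch the audit step is meant to catch.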

Configuring Purview DLP Policies and Output Governance

Establish Clear DLP Boundaries: Configure policies that block Copilot from exposing sensitive information outside defined parameters. Map critical content and set up targeted rules based on business needs, not one-size-fits-all templates. For guidance on setting up DLP, check this step-by-step resource.

Segregate Environments and Connectors: Create business and non-business boundaries in Power Platform and Microsoft 365 so that DLP rules stop data from “jumping” environments or use cases. This helps contain future Copilot integrations as your workplace grows.

Monitor Copilot Outputs for Compliance: Put in place systems to track what Copilot is serving up. Use audit logs and reports to catch unauthorized surfacing of content or unsafe data use, and remediate fast when red flags appear. For more on connector governance and avoiding the classic kitchen-sink mistake, listen to this discussion.

Map DLP Alerts to Incident Response: Connect DLP alerting to your Security Operations Center (SOC), so policy violations get triaged quickly. Your incident playbook should link alerts, contain potential data loss, and drive learning for future rule improvement.

Continuously Test and Optimize DLP: Review DLP rule hits, misses, and false positives. Adjust sensitivity and coverage to maintain compliance without blocking legitimate workflows. This keeps security strong without interrupting business productivity.
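One reason DLP tuning matters is that a bare pattern match produces a lot of false positives. The Python sketch below shows the common fix for card numbers: pair a loose regular expression with the Luhn checksum so random 16-digit strings are ignored. This illustrates the general technique only; the regular expression and function names are assumptions, not the definition of any Purview sensitive information type.

```python
import re

# Loose candidate pattern: 13 to 19 digits, optionally separated by spaces or hyphens.
CARD_CANDIDATE = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")


def luhn_valid(digits: str) -> bool:
    """Luhn checksum: double every second digit from the right, sum, test mod 10."""
    total = 0
    for index, char in enumerate(reversed(digits)):
        value = int(char)
        if index % 2 == 1:
            value *= 2
            if value > 9:
                value -= 9
        total += value
    return total % 10 == 0


def find_card_numbers(text: str) -> list[str]:
    """Return digit strings that match the pattern and pass the checksum."""
    hits = []
    for match in CARD_CANDIDATE.finditer(text):
        digits = re.sub(r"[ -]", "", match.group())
        if luhn_valid(digits):
            hits.append(digits)
    return hits


sample = "Card 4111 1111 1111 1111 on file; order ref 1234 5678 9012 3456."
print(find_card_numbers(sample))  # ['4111111111111111']
```

The order reference looks like a card number to the pattern but fails the checksum, so it never raises an alert. That is the kind of false positive you want to remove before a rule starts blocking legitimate work.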

Automating Lifecycle and Access Management at Scale

Deploy Automated Retention Policies: Use Microsoft Purview to set custom retention and expiration for content by type, sensitivity, and regulatory requirement. This ensures data is kept as long as needed and deleted when it’s not, shrinking your attack surface automatically.

Detect Orphaned or Stale Content: Leverage automation to flag content with missing owners, inactive users, or stagnant collaboration. Streamline review and clean-up so your data environment stays healthy, not cluttered with forgotten documents that Copilot could surface by mistake.

Simplify User Onboarding and Offboarding: Automate access provisioning and revocation. This prevents permission creep as employees change roles or leave, reducing exposure from “zombie” accounts and old projects still visible to Copilot.

Integrate Lifecycle Management into Apps: Embed content lifecycle triggers (like retention, archiving, or review requests) into your core Microsoft 365 experiences. Empower content owners to see, act, and verify status from where they work, not through a separate admin portal.

Iterate Based on Data Hygiene Metrics: Watch trends in stale content, ownership gaps, or retention failures. Use these metrics to drive governance tweaks and keep your Copilot program agile, even as usage scales and business needs change. (For more on automation, you can browse recent podcasts via this collection.)
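Stale and orphaned content detection, as described in the list above, mostly comes down to two checks per item. The Python sketch below shows them on a tiny hand-made dataset; the `Item` fields, the file paths, and the 365-day staleness threshold are assumptions for illustration, and a real job would read these values from your own reporting source.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Item:
    path: str
    last_modified: date
    owner_active: bool   # False when the owning account is disabled or deleted


def review_reasons(item: Item, today: date, stale_after_days: int = 365) -> list[str]:
    """Return why an item should go to a lifecycle review, or [] if it is healthy."""
    reasons = []
    if not item.owner_active:
        reasons.append("owner inactive")
    if today - item.last_modified > timedelta(days=stale_after_days):
        reasons.append("stale")
    return reasons


# Passing the date in (rather than calling date.today()) keeps runs repeatable.
today = date(2026, 1, 15)
items = [
    Item("/sites/old/plan.docx", date(2024, 6, 1), True),
    Item("/sites/hr/offer.docx", date(2025, 12, 1), False),
    Item("/sites/sales/deck.pptx", date(2026, 1, 2), True),
]
for item in items:
    print(item.path, review_reasons(item, today))
```

The count of items with a non-empty result, tracked month over month, is a reasonable starting point for the data hygiene metrics this section recommends.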

Microsoft Copilot Governance Playbook: Securing Copilot with Microsoft Purview — Checklist

Use this checklist to implement and validate Purview controls that secure Microsoft Copilot usage and data handling.

Preparation & Discovery

Inventory Copilot-integrated services and applications (Microsoft 365 apps, Teams, custom connectors)

Identify data sources Copilot can access (SharePoint, OneDrive, Exchange, Dataverse, external connectors)

Map data sensitivity labels and classification taxonomy relevant to Copilot scenarios

Confirm licensing and Purview capabilities required (sensitivity labeling, DLP, data lifecycle, audit)

Data Classification & Labeling

Define sensitivity labels specific to Copilot risk profiles (Public, Internal, Confidential, Highly Confidential)

Configure automatic and recommended labeling policies for data stores Copilot accesses

Test label propagation through connectors and document/content previews used by Copilot

Ensure labeled data includes restrictions (encryption, access, sharing conditions) aligned with Copilot use

Data Loss Prevention (DLP)

Create Purview DLP policies covering data at rest and in transit for Copilot workflows

Configure rules to block or restrict Copilot responses when content contains high-risk labels or patterns

Apply conditions for external sharing or connector-based prompts to prevent exfiltration

Enable policy tips and user education for DLP detections related to Copilot prompts

Access Controls & Entitlement Management

Enforce least privilege for Copilot service principals and connectors via Microsoft Entra Conditional Access

Restrict Copilot access to scoped data sets using Purview sensitivity labels and access policies

Review and remove unnecessary admin or service permissions regularly

Implement Privileged Identity Management for high-risk roles interacting with Copilot

Content & Query Controls

Configure prompt filtering and content redaction for Copilot queries that reference sensitive labels

Block or obfuscate retrieval of sensitive documents in Copilot-generated responses

Set rules for telemetry and prompt logging to avoid storing sensitive content unprotected

Validate Copilot behavior against test prompts containing labeled sensitive data

Monitoring, Auditing & Alerts

Enable unified audit logging for Copilot interactions and Purview policy matches

Configure alerts for DLP violations, unusual Copilot data accesses, and policy overrides

Integrate Purview alerts with SIEM and SOAR for automated investigation and response

Create dashboards to track Copilot-related policy triggers, access patterns, and incidents

Retention, Records & Data Lifecycle

Apply retention and record management policies for Copilot-generated content and logs

Ensure deletion and archival processes honor sensitivity labels and legal holds

Validate that exported Copilot logs or transcripts are stored securely and access-controlled

Incident Response & Remediation

Include Copilot/Purview scenarios in incident response runbooks (data exposure, policy bypass)

Define steps to contain, investigate, and remediate Copilot-related data incidents

Prepare communication templates and compliance notifications for impacted stakeholders

Training, Communication & Governance

Train users on safe Copilot usage, prompt hygiene, and sensitivity labels

Publish governance policies covering Copilot usage, acceptable prompts, and enforcement

Assign a governance owner responsible for Copilot + Purview alignment and reviews

Review & Continuous Improvement

Schedule periodic reviews of Purview policies and Copilot integrations (quarterly recommended)

Run simulated prompts and red-team tests to validate protections remain effective

Update classification rules and DLP conditions as new Copilot features or connectors are introduced

Adapt these checklist items to your organization’s risk profile and compliance requirements.

Balancing Empowerment with Self-Service Governance in Copilot

Copilot can supercharge productivity—but only if users are empowered to use it responsibly. Tight control is important, but too much lock-down frustrates teams and stifles innovation. A modern governance model balances security guardrails with intuitive, safe self-service, building confidence and trust at every level.

This section introduces frameworks that let staff classify, share, and access content with the right guidance and technology backstops. It’s about setting up joint ownership, intuitive labeling, and seamless workflows—so people feel safe using Copilot and don’t try to work around controls out of desperation.

Building this trust also rests on training—using real scenarios, prompt libraries, and feedback processes that help reinforce desired behavior. The goal: responsible adoption that scales, boosts productivity, and leaves nobody behind. For extra tips, especially on nailing security without being a nuisance, see guidance on unbreakable M365 security that doesn’t annoy users.

Designing Secure Self-Service Models and Guardrails

Empower with Secure Defaults: Leverage intuitive defaults, like auto-labeling, access warnings, or micro-prompts, so employees can share, classify, and contribute without constant fear of “messing up.” Humans do better when the safe choice is the easy choice.

Design Joint Ownership and Backstopping: Encourage teams to share responsibility for data, with no single point of failure and no orphaned files. Set up automated reviews, shared checks, and escalation when sensitive content is re-shared or permissions change unexpectedly.

Integrate Flexible Guardrails (Not Rigid Walls): Balance risk with agility. Use just-in-time access, risk-based multi-factor authentication (MFA), and conditional policies so staff aren’t completely blocked during critical work but always “color inside the lines.” For frameworks on balancing Zero Trust without suffocating freedom, dive into this practical playbook.

Make Risk Management Digestible: Give users simple prompts (“Is this confidential? Should it be labeled?”), not 80-page training manuals. Raise risk awareness with just-in-time guidance, not heavy-handed policing.

Set Auditability and Escalation Paths: Every action in self-service should create a traceable event, ready for periodic review and fast escalation if guardrails are breached. That way, you lean into trust while keeping regulators and security teams happy.
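To show what “flexible guardrails, not rigid walls” can look like in practice, here is a small Python sketch of a risk-based decision that has three outcomes instead of two. The signals, the risk levels, and the ordering of the rules are assumptions chosen for this example; they are not Conditional Access policy syntax.

```python
def access_decision(compliant_device: bool, sign_in_risk: str, label: str) -> str:
    """Return 'allow', 'require_mfa', or 'block' from three illustrative signals.

    sign_in_risk is expected to be 'low', 'medium', or 'high' (an assumption).
    """
    # Hard stops first: risky sign-ins, or top-tier data on an unmanaged device.
    if sign_in_risk == "high":
        return "block"
    if label == "Highly Confidential" and not compliant_device:
        return "block"
    # Middle ground: let the user continue after stepping up authentication.
    if sign_in_risk == "medium" or not compliant_device:
        return "require_mfa"
    return "allow"


print(access_decision(True, "low", "Internal"))              # allow
print(access_decision(False, "low", "Internal"))             # require_mfa
print(access_decision(False, "low", "Highly Confidential"))  # block
```

The middle outcome is the point: most users on a personal device get a step-up challenge rather than a dead end, so they have less reason to work around the control.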

Training Employees and Building a Governance-Minded Culture

Make Training Direct and Role-Based: Deliver targeted lessons using the actual workflows, apps, and scenarios staff hit every day. Skip the generic AI “best practice” videos and give sales, operations, HR, and finance teams their own Copilot playbooks and risk alerts.

Leverage Governance Prompt Libraries: Equip users with “safe” prompt templates and usage examples so they can get results fast, without stumbling into risky or noncompliant behaviors. Use governance libraries as living documents, regularly updated from real-world feedback.

Establish Ongoing Feedback Loops: Make it easy for employees to flag problems, suggest improvements, or report “weird” Copilot behaviors. Regular surveys, suggestion boxes, and office hours turn training into a two-way conversation.

Celebrate Governance Successes: Recognize teams or individuals who model secure Copilot use. Share wins and learnings from pilot programs, like ways centralized training centers cut help desk tickets, discussed in this best-practice case study.

Refresh and Reinforce Training: Don’t treat Copilot and AI training as “one and done.” Offer periodic refreshers, pop-up reminders, and spotlight new risks as Copilot evolves. Consistency is what turns awareness into culture.

Continuous Monitoring, Compliance, and Risk Mitigation in Copilot

Governance isn’t set-and-forget. For Copilot to stay safe and compliant, you need real-time monitoring, robust audit trails, and proactive compliance reviews—tools and processes working hand-in-hand. As Copilot usage grows, so does the potential for new risks, accidental oversharing, or compliance blind spots.

This section tackles how to keep an eye on everything Copilot touches: extracting rich activity logs, spotting oversharing before it hurts, and documenting data flows for auditors and security teams. We also dig into preparing for regulatory requirements—HIPAA, SOC 2, FedRAMP, and more—so you’re always ready for industry standards.

Finally, it’s about avoiding the “oh-no” moments—where trust erodes, policy drift sneaks in, or emerging risks spiral out of control. By operationalizing these tools and habits, you can move from defensive firefighting to active governance leadership. Don’t miss the deep dive on user activity auditing at this resource for maximizing audit trail value across Purview’s tiers.

Monitoring Copilot Usage, Oversharing Detection, and Audit Trails

Leverage Advanced Monitoring Tools: Use Microsoft Graph, Purview, and Sentinel to pull comprehensive logs of user and Copilot activities. These tools show who’s using Copilot, what data is being accessed, and where potential policy violations occur.

Detect Oversharing and Anomalies: Set up alerts for unusual sharing, unexpected permission shifts, or sudden spikes in data output. Automated dashboards flag risky behavior for quick review, preventing small leaks from becoming full-blown incidents.

Maintain Robust Audit Trails: Enable Purview Audit (preferably Premium for sensitive environments) to capture detailed user and agent activity for months or years, supporting compliance, forensics, and incident investigation. For tier details and step-by-step audit setup, check this walkthrough.

Extract and Analyze Usage Inventory: Build reports tracking Copilot utilization, DLP hits, and content access breakdowns. Use this data to fine-tune policies, justify license adjustments, and ensure resources align with real needs, not just org chart assumptions.

Foster a Culture of Accountability: Share transparent metrics with both leadership and staff. This not only builds trust but also encourages positive habits and mutual responsibility for governance outcomes, making Copilot part of your “get stuff done, get stuff right” ethos.
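To make the “sudden spikes in data output” alert concrete, here is a minimal sketch of one way to flag unusual days. It assumes you have already exported a daily count of Copilot-related sharing or output events from your audit tooling. The function name, window size, and threshold are illustrative choices, not part of any Microsoft API.

```python
from statistics import mean, stdev


def flag_spikes(daily_counts, window=7, threshold=3.0):
    """Return indexes of days whose count is far above the trailing baseline.

    A day is flagged when its count exceeds the mean of the previous
    `window` days by more than `threshold` standard deviations.
    """
    flagged = []
    for i in range(window, len(daily_counts)):
        baseline = daily_counts[i - window:i]
        mu = mean(baseline)
        # Floor the deviation at 1.0 so a perfectly flat baseline
        # does not turn every tiny change into an alert.
        sigma = max(stdev(baseline), 1.0)
        if daily_counts[i] > mu + threshold * sigma:
            flagged.append(i)
    return flagged


# Hypothetical daily event counts; day 8 is an obvious outlier.
counts = [10, 12, 11, 9, 10, 13, 11, 12, 48, 11]
spike_days = flag_spikes(counts)
print(spike_days)
```

A trailing-window baseline is deliberately simple. It suits a first dashboard, and you can swap in whatever anomaly logic your SIEM already provides once the alert proves useful.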

Regulatory Compliance Gaps and Meeting Industry Standards

Map Regulatory Requirements to Copilot Policies: Identify which controls are critical for HIPAA, SOC 2, FedRAMP, or sector-specific audits. Develop a policy matrix that links each regulatory clause to a Copilot control, making sure you can prove compliance for every audit item.

Address Audit Readiness Proactively: Document evidence of control effectiveness: activity logs, access reviews, DLP alerts, and incident responses. Build automated scripts and “audit kits” showing how you maintain compliance year-round, not just during reviews.

Enforce Governance on Derived and AI-Generated Content: Don’t forget Copilot Notebooks and other derivative artifacts. These outputs must be classified and labeled by default, or you risk an ungoverned “shadow lake” exploding overnight, a challenge highlighted in this cautionary take.

Keep Pace with Changing Standards: Monitor changes in relevant laws (GDPR, CCPA, evolving US data rules) and regularly update your control set. Stay in the loop with your industry’s compliance groups and refresh governance policies as regulations evolve.

Prepare for Industry-Specific Audits: Tailor your documentation, controls, and communications to the jargon and focus areas auditors will bring. Being ready before the audit window opens means no late-night fire drills, and no embarrassing gaps dragged into the light.
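The policy matrix described above can start as a small data structure that you query for gaps. The sketch below assumes a hypothetical `ControlMapping` record; the rows are placeholders for illustration, not legal or audit guidance.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ControlMapping:
    framework: str            # e.g. "HIPAA", "SOC 2", "FedRAMP"
    requirement: str          # short description of the audit item
    control: Optional[str]    # Copilot-related control that addresses it
    evidence: Optional[str]   # where the proof lives (log, report, review)


def find_gaps(matrix):
    """Return mappings that are missing a control or missing evidence."""
    return [m for m in matrix if not m.control or not m.evidence]


# Placeholder rows; replace with the requirements your auditors cite.
matrix = [
    ControlMapping("HIPAA", "Audit trail of access to patient data",
                   "Purview Audit enabled", "Quarterly audit log export"),
    ControlMapping("SOC 2", "Periodic access reviews",
                   "Quarterly permission review", None),
    ControlMapping("FedRAMP", "Incident response procedure", None, None),
]

for gap in find_gaps(matrix):
    print(f"{gap.framework}: {gap.requirement}")
```

Keeping evidence as its own column matters: a control with no retrievable proof is still a finding when the auditor asks for it.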

Avoiding Failure Modes, Trust Erosion, and Emerging Risks

Spot and Patch Policy Drift: Monitor for exceptions, outdated controls, and lapses in discipline. Unchecked drift results in compliance failures and, eventually, board-level headaches. Routinely review exceptions and insist on periodic governance “health checks.”

Guard Against Data Oversharing Amplification: AI amplifies human slip-ups. If data isn’t locked down, Copilot can surface emails, files, or chats that should never see the light of day. Prioritize proactive permissions reviews and automate warnings around sensitive data exposure.

Maintain System-Level Ownership (Not Just Tool Governance): Don’t get trapped governing in app silos or “tool teams.” Own the lifecycle, identities, and automations of the entire M365 system, publicly and with teeth. More on rooting out fragmented ownership in this governance case study.

Identify and Address Emerging Risks Swiftly: From automation-bound agents outpacing controls to hallucinating AI outputs, every new Copilot feature will stress your governance. Test features in sandboxes, capture errors in real time, and retool governance every time new risks pop up, as discussed in this “Agentageddon” primer.

Prevent Trust Erosion with Fast, Transparent Remediation: Handle incidents swiftly, communicate openly, and make changes visible across the org. Every time users see risks addressed and feedback acted on, trust in Copilot (and in IT) grows stronger instead of weaker.

Advanced Copilot Architecture and Future-Proofing Governance

If you want Copilot to work seamlessly as workloads, apps, and user populations grow, you need to plan for governance at scale. That means moving beyond single-surface policies to centralized, orchestrated controls—so enforcement, monitoring, and compliance all keep pace with innovation across 80+ Microsoft 365 and third-party platforms.

This section explores how tools like Copilot Studio and advanced routing offer a powerful hub for governing AI interactions everywhere—not just in a handful of apps, but across the full digital estate. Think orchestrating data, policies, and workflows from a central control plane, with security baked in from day one.

It also walks through how to keep data protection and output quality rock-solid as Copilot becomes embedded in more business processes. If you want a direct look at marrying Purview-driven governance and agent lifecycle controls, don’t miss this masterclass on centralized Copilot agent management, and for a pragmatic take on separating the “experience” from the “governance” plane, see recommendations on safe AI agents in Microsoft environments.

Leveraging Copilot Studio and Centralized Routing for Scalable Governance

Centralize Policy Enforcement Via Copilot Studio: Use Copilot Studio as the single place to configure, update, and enforce governance controls across all Copilot integrations, spanning Teams, Outlook, SharePoint, Power Platform, and third-party connectors. This approach enables “one policy, 80+ surfaces,” driving consistency and cutting policy drift.

Orchestrate Advanced Routing Workflows: Design flows that guide Copilot requests through security, legal, or compliance gates, depending on data sensitivity and use case. Build reference models and approvals into the workflow so risky actions are flagged, escalated, or blocked in real time when stakes are high.

Integrate Identity and Role Control Layers: Automate identity validation with Entra Agent IDs and role requirements, preventing agents or users from “drifting” into unintended privileges. This limits operational chaos when new AI integrations are added or legacy links change.

Automate Audit Logging Across All Touchpoints: Build audit hooks and standardized logging into every Copilot-powered interface. That way, every API call, human decision, and agent action is subject to continuous monitoring, with no dead zones where things go untracked.

Test and Manage Policy Consistency at Scale: Frequently validate enforcement across platforms. Look for misconfigurations, missed updates, or siloed agents. For field-tested control-plane tips, jump to this discussion on scaling AI agent governance.
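The routing idea above, sending requests through different review gates depending on data sensitivity and use case, boils down to a small decision table. The sketch below is tool-agnostic and does not use any Copilot Studio API. The labels, gate names, and use cases are hypothetical and should be replaced with your own taxonomy.

```python
# Hypothetical sensitivity labels mapped to the review gates they require.
GATES_BY_SENSITIVITY = {
    "public": [],
    "internal": [],
    "confidential": ["security_review"],
    "highly_confidential": ["security_review", "legal_review"],
}

# Hypothetical use cases that always need compliance sign-off.
COMPLIANCE_USE_CASES = {"hr_decision", "external_disclosure"}


def route_request(sensitivity, use_case):
    """Return the ordered list of gates a request must pass."""
    if sensitivity not in GATES_BY_SENSITIVITY:
        # Unknown or missing labels fail closed until classified.
        return ["blocked_pending_classification"]
    gates = list(GATES_BY_SENSITIVITY[sensitivity])
    if use_case in COMPLIANCE_USE_CASES:
        gates.append("compliance_review")
    return gates


print(route_request("internal", "summarize_meeting"))
print(route_request("highly_confidential", "hr_decision"))
print(route_request("unlabeled", "summarize_meeting"))
```

Failing closed on unlabeled content is the key design choice here: it turns missing classification into a visible queue rather than a silent bypass.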

Ensuring Commercial Data Protection and Output Quality in Copilot

Understand Commercial Data Protection Commitments: Microsoft separates “enterprise” and “public” data. Its stated commitment is that prompts, responses, and data accessed through Microsoft Graph are not used to train foundation models. Review commercial terms to know exactly what contractual controls govern Copilot use in your tenant.

Apply a Four-Part Output Quality Framework: Set expectations and rules for safe outputs: 1) enforce data classification on outputs, 2) require source attribution or links, 3) flag and proofread summaries/derivatives, and 4) escalate questionable or low-confidence generations for additional review.

Integrate Security Teams Throughout the Lifecycle: Pull in security to review not just initial deployments, but every new Copilot workflow and output channel. This ensures any new interfaces are pressure-tested for leaks and compliance gaps before hitting users.

Monitor Dashboards and Output Metrics: Use Power BI dashboards and custom metrics to track output quality, rule violations, and trends. If one Copilot surface starts spitting out unapproved data, you can isolate and remediate before it becomes a systemic risk.

Design Governance as a People-Process-Technology System: Remember, governance isn’t “automatic.” Native controls help, but policies, ownership, and intentional process design close the gaps. Practical advice on integrating the full system is available in this “governance illusion” breakdown.
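The four-part output quality framework above can be expressed as a checklist function that a review workflow runs over each generated artifact. This is a sketch under stated assumptions: `GeneratedOutput` and its fields are hypothetical, and the `confidence` score in particular is something your own review process would have to supply, since it is not a value Copilot is known to expose.

```python
from dataclasses import dataclass, field


@dataclass
class GeneratedOutput:
    text: str
    sensitivity_label: str = ""                  # empty means unclassified
    sources: list = field(default_factory=list)  # links or citations
    is_derivative: bool = False                  # summary or derived content
    human_reviewed: bool = False
    confidence: float = 1.0                      # hypothetical 0..1 score


def quality_findings(output, min_confidence=0.7):
    """Return the framework checks this output fails, in framework order."""
    findings = []
    if not output.sensitivity_label:
        findings.append("missing_classification")
    if not output.sources:
        findings.append("missing_source_attribution")
    if output.is_derivative and not output.human_reviewed:
        findings.append("derivative_needs_proofreading")
    if output.confidence < min_confidence:
        findings.append("escalate_low_confidence")
    return findings


draft = GeneratedOutput(text="Q3 summary", is_derivative=True, confidence=0.5)
print(quality_findings(draft))
```

An empty list means the output passes all four checks; anything else names exactly which rule to remediate.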

Measuring Copilot Governance Effectiveness with KPIs and ROI Metrics

Rolling out Copilot isn’t enough—organizations need to prove it’s safe, compliant, and delivering real business value. That’s where measurement comes in. This section moves beyond “Did we implement that control?” and focuses on KPIs, metrics, and ROI models that demonstrate actual progress, effectiveness, and business impact to leadership.

We’ll look at how to measure adoption quality, policy adherence, and risk reduction. You’ll see how operational data (like DLP alert rates or labeled-data growth) ties directly to governance outcomes, as well as how to estimate cost savings, avoided remediation, and uplift in AI-enabled productivity. That kind of evidence turns governance from a sunk cost into a business-proven investment.

It also sets the stage for outcome-based accountability—tying progress, cost, and benefit into one clear value story. For background on why showback needs teeth to drive real change, check this episode on cost transparency versus accountability.

Defining Key Performance Indicators for Copilot Governance Outcomes

Reduction in Policy Violations: Track the number, frequency, and type of Copilot-related policy breaches (DLP, sharing, access) before and after each governance milestone. A declining trend signals effective policy and training interventions.

DLP Alert Resolution Time: Measure how quickly DLP and compliance alerts are identified, escalated, and resolved. Faster cycles mean risks are being caught and remediated, reducing your exposure window.

Labeled Data Volume Growth: Monitor how much of your data corpus is covered by meaningful sensitivity labels each quarter. Growth demonstrates stronger protection, reduced unclassified “dark” data, and successful self-service classification models.

User Compliance and Adoption Rates: Track user activity on governance steps: how many actively label, complete training, pass prompt usage reviews, and correctly escalate incidents. High participation reflects a well-embedded governance culture.

Operational Metrics Tied to Outcomes: Aggregate data such as audit findings, incident response trends, or drops in Copilot-related help desk tickets. Link these numbers back to governance activities for clear cause-and-effect storytelling. For integration and automation pointers, see this Power BI-driven compliance monitoring resource.
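Three of the KPIs above reduce to simple arithmetic once the underlying counts are exported. The sketch below assumes you already have violation counts per period, alert open and resolve timestamps, and labeled and total item counts. The function names and sample figures are illustrative only.

```python
from datetime import datetime


def violation_reduction_pct(before, after):
    """Percent drop in policy violations between two periods."""
    if before == 0:
        return 0.0
    return (before - after) * 100 / before


def mean_resolution_hours(alerts):
    """Average hours from open to resolve, ignoring unresolved alerts."""
    durations = [
        (resolved - opened).total_seconds() / 3600
        for opened, resolved in alerts
        if resolved is not None
    ]
    return sum(durations) / len(durations) if durations else 0.0


def labeled_coverage_pct(labeled_items, total_items):
    """Share of the data corpus carrying a sensitivity label."""
    return labeled_items * 100 / total_items if total_items else 0.0


# Hypothetical sample data.
alerts = [
    (datetime(2026, 1, 5, 9, 0), datetime(2026, 1, 5, 13, 0)),   # 4 hours
    (datetime(2026, 1, 6, 9, 0), datetime(2026, 1, 6, 17, 0)),   # 8 hours
    (datetime(2026, 1, 7, 9, 0), None),                          # still open
]

print(violation_reduction_pct(before=40, after=25))
print(mean_resolution_hours(alerts))
print(labeled_coverage_pct(6200, 10000))
```

Unresolved alerts are excluded from the average here, so track the open-alert count alongside it; otherwise a backlog can hide behind a healthy-looking resolution time.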

Calculating Governance ROI and Business Value

Calculate Cost Savings from Avoided Data Leaks: Use benchmarked industry costs for breach remediation and apply them to policy violation reductions to estimate dollar-value risk mitigated.

Estimate Reduced Remediation and Support Efforts: Measure the drop in incident response time, help desk volume, and time spent re-training. Quantify these labor savings directly in business value calculations.

Assess Uplift in Trusted AI Adoption: Track the correlation between governance maturity and growth in users and use cases. Are more teams and users safely using Copilot over time?

Present Risk-Adjusted ROI Models to Leadership: Bundle direct spend, risk avoidance, and productivity gains into a simple, compelling business case that shows governance as a generator of enterprise value. For more, see the pitfalls of showback-only models in this expert discussion.
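One simple way to bundle spend, risk avoidance, and productivity gains is sketched below. It is an illustrative model, not a standard formula: the `likelihood` weight (the chance that an avoided violation would have become a costly incident) and every dollar figure are assumptions you would replace with your own benchmarks.

```python
def risk_adjusted_roi(spend, avoided_incidents, cost_per_incident,
                      likelihood, labor_savings, productivity_gain):
    """Return (net_benefit, roi_ratio) for one reporting period.

    Risk avoidance is weighted by `likelihood` so the model does not
    assume every avoided violation would have become a full incident.
    """
    risk_avoidance = avoided_incidents * cost_per_incident * likelihood
    total_benefit = risk_avoidance + labor_savings + productivity_gain
    net_benefit = total_benefit - spend
    roi_ratio = net_benefit / spend if spend else 0.0
    return net_benefit, roi_ratio


# Hypothetical annual figures in dollars.
net, roi = risk_adjusted_roi(
    spend=200_000,
    avoided_incidents=4,
    cost_per_incident=50_000,
    likelihood=0.5,
    labor_savings=60_000,
    productivity_gain=90_000,
)
print(f"Net benefit: ${net:,.0f}, ROI: {roi:.0%}")
```

Showing leadership the likelihood weight explicitly tends to make the case more credible than an unweighted “breaches avoided” number.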

Cross-Functional Governance Enablement Beyond IT

IT can’t handle Copilot governance alone. Legal, compliance, HR, and business leaders each carry their own unique risks, obligations, and expertise—and all must be part of the program. This section sets up tailored guidance, making sure non-technical stakeholders know how to keep governance working long-term.

Role-Specific Playbooks for Legal and Compliance Stakeholders

Audit Readiness: Legal teams should develop checklists and maintain audit trails, ensuring documentation and evidence are always ready for regulatory or third-party reviews. Automated “audit kits” speed up the response and remove panic from last-minute requests.

Evidence Collection Protocols: Standardize log archiving, role-based access records, and versioning. Retain snapshots of policy state changes for future dispute resolution or compliance investigation.

Privacy Law Alignment (GDPR, CCPA): Link Copilot policies to specific clauses in relevant laws, and set up regular compliance gap scans. Practice runs and process documentation help legal quickly demonstrate adherence. For more practical steps, try this SharePoint and Power Apps governance guide.

Ongoing Documentation Processes: Schedule regular documentation updates to capture new Copilot capabilities, third-party integrations, or changes in the legislative landscape.

Governance for HR Leaders and Ethical Talent Interactions

Govern AI in Recruitment: Set strict boundaries around how Copilot helps with screening, scoring, or communications to prevent algorithmic bias and privacy breaches in hiring decisions.

Control Copilot in Performance Reviews and Communications: Establish clear rules for generative AI use in review cycles and employee messaging, ensuring outputs are always reviewed by a human, especially when decisions affect promotions or pay.

Mitigate Bias and Preserve Trust: Use regular bias audits and staff training so Copilot outputs never reinforce systemic bias or erode employee trust. Make it transparent when AI is used in people decisions and why.

Incident Response and Remediation for Copilot-Related Data Exposure

No governance plan is perfect—incidents will happen. The difference between a stressful failure and a minor event is how prepared you are to contain, investigate, and learn from data exposures related to Copilot. Most playbooks focus everything on prevention, but a robust response plan is a must for operational resilience.

This section lays out the steps for smart, fast triage—classifying incidents, assigning escalation paths, mobilizing responders, and integrating the right monitoring and containment tools. It also covers how to close the loop, making incident reviews a core part of your ongoing governance improvement. In-depth audit techniques with Purview are explained at this auditing guide.

Bottom line: what you do after a Copilot incident can transform risk into opportunity, driving stronger processes and building trust in both your AI systems and your ability to manage them well.

AI Incident Triage and Escalation Protocols

Define Incident Classification Schemes: Distinguish between true data leakage (sensitive content exposed to unauthorized users) and AI output errors (hallucinations, policy misses). Each type deserves its own escalation and remediation workflow.

Assign Clear Escalation Paths and Roles: Document who leads initial response, who notifies legal, and who invokes forensics. Predefine “hotlines” for high-severity leaks or public disclosures.

Integrate Forensic Tools for Rapid Response: Leverage Microsoft Purview Audit and Sentinel to quickly identify the who, what, and when of incidents. Fast evidence collection limits impact and lowers the risk of regulatory violations. Learn more in this step-by-step guide.

Initiate Immediate Response Actions: Contain exposure, reset permissions if needed, notify affected users or business leads, and prepare an incident report for post-mortem analysis.
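The classification and escalation steps above can be captured in a few lines so that triage is consistent no matter who is on call. The sketch below assumes a hypothetical `Incident` record and placeholder role names. The rule it encodes follows the distinction drawn above: sensitive content reaching an unauthorized audience is a leak, and everything else is treated as an output error.

```python
from dataclasses import dataclass


@dataclass
class Incident:
    sensitive_content: bool        # did the output include sensitive data?
    unauthorized_audience: bool    # did someone without access see it?
    public_disclosure: bool = False


# Hypothetical role names; replace with your own escalation contacts.
ESCALATION = {
    "data_leak_high": ["incident_lead", "legal", "forensics", "executive_hotline"],
    "data_leak": ["incident_lead", "legal", "forensics"],
    "output_error": ["incident_lead", "copilot_product_owner"],
}


def classify(incident):
    """Separate true data leakage from AI output errors."""
    if incident.sensitive_content and incident.unauthorized_audience:
        return "data_leak_high" if incident.public_disclosure else "data_leak"
    return "output_error"


def escalation_path(incident):
    """Return who to notify, in order, for this incident."""
    return ESCALATION[classify(incident)]


leak = Incident(sensitive_content=True, unauthorized_audience=True)
print(classify(leak), escalation_path(leak))
```

Keeping the escalation table as data rather than logic makes it easy to review with legal and to update after each post-incident analysis.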

Post-Incident Analysis and Closing the Governance Loop

Incident Review and Root Cause Analysis: Investigate what allowed the incident, whether a missed label, failed DLP rule, or a new Copilot use case. Map every incident to preventive actions.

Update Sensitivity Labels or DLP Policies: Revise labeling defaults and rule triggers in Purview based on what went wrong. Use hard lessons for proactive improvement, not just patchwork fixes.

User Retraining and Awareness: Reinforce secure behaviors and policy compliance with targeted training refreshers for those involved. Spread knowledge of lessons learned to prevent repeat incidents.

Document and Communicate Improvements: Maintain detailed records of incident analysis and policy changes. Share these outcomes broadly to build organizational trust in governance resilience.

Essential Resources, Checklists, and Expert Support

This last section gives you practical tools to maintain momentum—weekly checklists, progress trackers, and tips for tapping expert support as your Copilot journey continues to evolve. You’ll find simple templates and clear advice for when to call in help, plus guidance for using appendices, FAQs, and other supporting materials.

Don’t let your governance program get lost in the shuffle. Use these resources both as a project management best friend and as a safety net as Copilot keeps growing and the compliance landscape shifts underneath you.

Weekly Governance Checklist to Track Progress

Review access inventory and permissions for Copilot-reachable data.

Run automated DLP and sensitivity labeling scans; address new findings.

Audit Copilot usage metrics and adjust license allocations as needed.

Refresh employee training assets and communicate any policy changes.

Schedule regular reviews of audit logs, incident reports, and feedback submissions.

How to Engage Experts and Access Playbook Appendices

Consult Microsoft-certified Copilot or Purview experts for architecture and compliance questions.

Bring in third-party advisors for forensic investigations or regulatory gap analyses.

Use playbook appendices for template policies, sample incident reports, and FAQs covering tricky scenarios.

Tap regularly updated resource hubs for the latest Microsoft 365 governance tips and guides.

Connect with peer organizations to exchange best practices and lessons learned on Copilot rollouts.

Copilot Deployment and Rollout FAQ

What is a Microsoft Copilot governance playbook?

A Microsoft Copilot governance playbook is a governance framework and operational guide that defines governance requirements, the roles on the governance team, and the controls and policies for Microsoft 365 Copilot and enterprise Copilot deployments. Its purpose is to ensure security and compliance while enabling productive use of Copilot for Microsoft 365.

How does Copilot deployment relate to existing governance policies?

Copilot deployment should align with your existing governance policies, including data loss prevention policies, Microsoft Purview Information Protection, and content governance rules, so that Copilot inherits your governance posture and respects access controls and compliance requirements across the entire Microsoft 365 environment.

Who should be on the governance team for Microsoft 365 Copilot?

A governance team should include stakeholders from IT, security and compliance, legal, privacy, HR, and business leadership to set governance controls, run a Copilot readiness assessment, manage Copilot license assignments, and oversee the governance rollout and ongoing monitoring.

What is a Copilot readiness assessment?

A Copilot readiness assessment evaluates technical preparedness (Microsoft 365 permissions, Microsoft Azure configuration, Power Platform admin center settings), data classification, DLP coverage, content governance gaps, and user training needs to ensure Copilot is deployed safely and in line with governance requirements.

How do you control what Copilot can reference?

Use governance controls such as Microsoft Purview information protection labels, access control lists, data loss prevention policies and permission scoping in the Microsoft 365 admin center and Microsoft Azure to limit the content that Copilot can reference and ensure Copilot respects access and privacy requirements.

Will Copilot surface sensitive data like social security numbers?

Copilot should not surface sensitive data when proper governance controls are in place. Configure Microsoft Purview Information Protection, DLP policies, and content governance to detect and block social security numbers and other regulated identifiers so that Copilot cannot surface that content in responses.

Does Copilot inherit Microsoft 365 permissions?

Yes, Copilot inherits Microsoft 365 permissions and respects access boundaries: it can only surface content and data that the requesting user is authorized to access, provided governance and permission settings are correctly configured.

What governance controls prevent Copilot from amplifying privileged data?

Robust governance includes role-based access control, data loss prevention policies, Purview classification, tenant-level settings in the Microsoft 365 admin center, and Conditional Access in Microsoft Entra ID to prevent Copilot from amplifying or exposing privileged data beyond authorized users.

How do I deploy Microsoft Copilot while ensuring good governance?

Follow a staged Copilot rollout: run a Copilot readiness assessment, pilot with a limited group, implement governance framework elements (DLP, Purview, access controls), refine your governance posture, and then scale deployment, with the governance team monitoring usage and compliance continuously.

Can Copilot be used with Microsoft Teams and Power Platform?

Yes, Copilot can integrate with Microsoft Teams and Power Platform. Governance must include Power Platform admin center controls, Teams data governance, and connector restrictions so that Copilot within those services adheres to your governance model and your security and compliance requirements.

What is content governance for Copilot?

Content governance covers how data is classified, stored, and exposed to Copilot, including policies for retention, labeling with Microsoft Purview Information Protection, and rules that determine what Copilot will surface from SharePoint, OneDrive, Teams, and other content sources across the tenant.

How do I ensure Copilot respects access and privacy?

Ensure Copilot respects access by enforcing Microsoft 365 permissions, Purview labels, and encryption, applying DLP policies, and using tests and audits to validate that Copilot cannot surface restricted content. Governance policies should document and enforce those controls.

What are common governance issues during a Copilot rollout?

Common issues include incomplete DLP coverage, misclassified sensitive data, improper permissions, lack of Power Platform controls, insufficient training causing misuse, and unclear governance requirements. Address these with a governance framework and continuous monitoring.

How should licenses be handled when deploying Copilot?

Manage Copilot license allocation through the Microsoft 365 admin center by mapping licenses to user roles, budgeting for Copilot license costs, and ensuring license assignment aligns with your governance and compliance policies and the planned Copilot rollout.

Can Copilot be configured to avoid referencing specific content sources?

Yes. You can control what Copilot can reference by restricting connectors, adjusting index and search scope, and applying site- and label-based exclusions in Microsoft Purview and the SharePoint and OneDrive settings, so that Copilot does not surface content your governance rules place out of scope.

How does Microsoft Purview information protection support Copilot governance?

Microsoft Purview Information Protection provides classification, labeling, and encryption that help enforce content governance, drive data loss prevention policies, and ensure Copilot respects sensitivity labels, so that Copilot cannot surface or store protected information outside allowed contexts.

Does Copilot know and respect tenant-level governance posture?

Copilot operates within your tenant-level governance posture when configurations are correctly applied. Permissions, Purview policies, DLP, Conditional Access, and tenant settings in Microsoft Azure and the Microsoft 365 admin center together define the governance constraints Copilot follows.

What monitoring and audit capabilities support Copilot governance?

Use Microsoft 365 audit logs, Purview Activity Explorer, Microsoft Entra ID (formerly Azure AD) sign-in and Conditional Access logs, and custom telemetry to detect anomalies, verify that Copilot surfaces only appropriate content, and maintain records for security and compliance reviews as part of your governance rollout.

How do I manage risk that Copilot might generate incorrect or biased outputs?

Mitigate that risk with training, usage policies, human review workflows, guardrails in the governance framework, testing during the Copilot readiness assessment, and control over the sources Copilot can reference, so that outputs are based on validated enterprise content.

Can I limit Copilot to only work with certain business units or data zones?

Yes. Implement a scoped Copilot deployment, partitioned with Microsoft Entra ID (formerly Azure AD) groups, SharePoint site permissions, label-based access, and conditional connectors, so that Copilot interacts only with designated business units or data zones.

How do governance requirements affect the speed of Copilot rollout?

Stronger governance requirements typically slow rollout because they require classification, policy configuration, testing and stakeholder alignment; however, planning a phased rollout with a readiness assessment balances speed and robust governance for a safer deployment.

What are best practices for user training and adoption for Copilot?

Provide role-based training, clear documentation on governance policies, example use cases, guidance on what Copilot can reference, and mechanisms to report issues. Training ensures employees use Copilot effectively while respecting security and compliance rules.

How do data loss prevention policies interact with Copilot features?

Data loss prevention policies intercept and block or audit attempts to share or surface sensitive data; when applied across Microsoft 365, DLP policies ensure Copilot inherits those protections and will not expose content that violates data loss prevention rules.

What should be included in a governance model for enterprise Copilot?

A governance model should include roles and responsibilities, technical controls (Purview, DLP, Microsoft Entra ID), deployment and rollback procedures, monitoring and auditing, training plans, license management, and a governance rollout plan defining stages and success criteria.

How can I ensure Copilot will surface relevant, compliant information?

Ensure Copilot will surface appropriate information by curating sources, applying Purview labeling, tightening Microsoft 365 permissions, implementing DLP policies, and continuously validating outputs through audits and user feedback loops managed by the governance team.

What steps help maintain a robust governance posture after deployment?

Maintain robust governance with continuous monitoring, periodic Copilot readiness reassessments, updates to governance policies, regular audit reviews, user training refreshers, and incident response plans, so that governance requirements stay addressed as Copilot evolves.

How does deploying Microsoft Copilot affect existing security and compliance programs?

Deploying Microsoft Copilot extends existing security and compliance programs by introducing new data interaction vectors. Integrate Copilot into your security and compliance processes, update policies in Microsoft Purview and the Microsoft 365 admin center, and coordinate with Azure security controls to manage risk.

Can I run Copilot without giving it access to all enterprise content?

Yes. You can deploy Copilot without broad access by scoping sources, applying strict permissions, using site- and label-based exclusions, and limiting connectors, so that Copilot has no access to sensitive repositories and uses only permitted content in its responses.